Now we can finally turn the Airflow on and configure the GCP connection. You can then create a new JSON key which will be download to your machine automatically.Īfter downloading the key, create a directory inside your dags directory and paste the key json file in it. To create a key, access the service accounts panel, click Manage Keys under Actions. Setup Google Cloud connection in Airflow UIīefore we setup the Google Cloud connection in Airflow UI, we need to generate a service account key from GCP to allow access from our local machine to the GCP. For BQ_PROJECT, it needs to be the project ID you’re using in BigQuery and BQ_DATASET would be the dataset name in BigQuery.Īfter configure those fields, let’s put the file into the dags directory. For BQ_CONN_ID, it is going to be the same id we put in Airflow UI later on. Within the DAG file, there are several things we need to configure including BQ_CONN_ID, BQ_PROJECT and BQ_DATASET. # Task 3: Check if inter data is written successfully QUALIFY ROW_NUMBER() OVER (PARTITION BY url ORDER BY full_description DESC) = 1ĭestination_dataset_table='.properties_inter'.format( ROW_NUMBER() OVER (PARTITION BY url ORDER BY full_description DESC) AS rnįROM `gary-yiu-001.testing_dataset.properties_raw` # Task 2: Run a query and store the result to another table

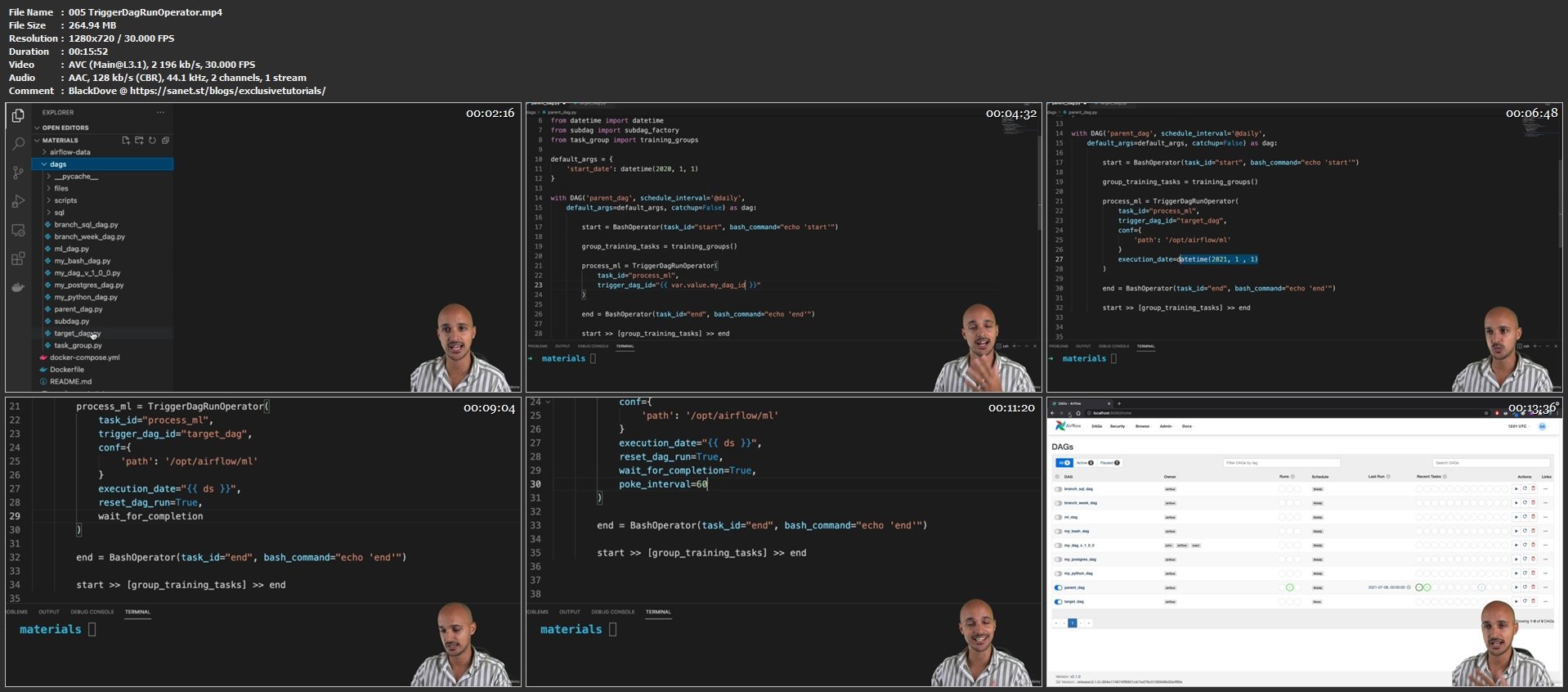

`gary-yiu-001.testing_dataset.properties_raw` # Task 1: check that the data table is existed in the dataset # Define DAG: Set ID and assign default args and schedule interval The following is the DAG we are going to use: import jsonįrom _operator import BigQueryOperatorįrom _check_operator import BigQuer圜heckOperator For how to construct a DAG, it will be covered in another post. Here I am using a simple DAG with just several BigQueryOperator to run some queries for demonstation purpose. Configure your DAG fileĪssuming you have a DAG for running some BigQuery tasks, we need to place the DAG file into the dags directory. Your file structure should look like this now. pluginsĮcho -e "AIRFLOW_UID=$(id -u)\nAIRFLOW_GID=0" >. There are several directories and user sertting required by the Docker so let’s configure them. This is the Docker file that will help to create the Airflow environment when you run the docker command. Use your terminal, fetch the docker-compose.yaml with the following command. Fetch docker-compose.yaml from AirflowĪfter installing docker, let’s create a working folder in your preferred location. It can be downloaded and installed here in Docker official site. Test run single task from the DAG in Airflow CLIįor Airflow to running locally in Docker, we need to install Docker Desktop, it comes with Docker Community Edition and Docker Compose which are two prerequisites to run Airflow with Docker.

Setup Google Cloud connection in Airflow UI.It involves the following 6 steps to set it up and we will go through it one by one:

I reference the tutorial on Youtube by Tuan Vu and using more recent version of Airflow to set it up locally. We can setup Airflow locally relatively simple using Docker. Before we deploy new DAG to production, it’s best practice to test it out locally to spot any coding error.
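Before pointing Airflow at the downloaded service account key, it can help to confirm that the file is where you expect it and looks like a service account key. The following is only a minimal sketch: the helper name `check_service_account_key`, the path `dags/keys/service-account.json`, and the choice of which fields to check are assumptions made for illustration, not part of the original setup.

```python
import json
from pathlib import Path

# Assumed subset of the fields found in a GCP service account JSON key.
REQUIRED_FIELDS = ("type", "project_id", "private_key", "client_email")


def check_service_account_key(key_path):
    """Return a list of problems with the key file; an empty list means it looks fine."""
    path = Path(key_path)
    if not path.is_file():
        return [f"key file not found: {path}"]
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc}"]
    if not isinstance(data, dict):
        return ["top-level JSON value is not an object"]
    # Report every required field that is absent from the key.
    problems = [f"missing field: {name}" for name in REQUIRED_FIELDS if name not in data]
    if data.get("type") != "service_account":
        problems.append("field 'type' is not 'service_account'")
    return problems


if __name__ == "__main__":
    # Hypothetical location: a directory created inside dags to hold the key.
    for problem in check_service_account_key("dags/keys/service-account.json"):
        print(problem)
```

Running the script from the working folder prints nothing when the key passes these checks, and one line per problem otherwise.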

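The three DAG configuration fields can be pictured as module-level constants that feed into fully qualified table names such as `gary-yiu-001.testing_dataset.properties_raw`. The sketch below is an assumption about how such a DAG file might be organised. The placeholder values and the helper `qualified_table` are hypothetical, and the `{0}.{1}.{2}` pattern is a guess at the format string whose placeholders were lost from the `destination_dataset_table` line.

```python
# Placeholder values: replace them with your own settings.
BQ_CONN_ID = "my_gcp_conn"    # hypothetical; must match the connection ID entered in the Airflow UI
BQ_PROJECT = "my-project-id"  # hypothetical BigQuery project ID
BQ_DATASET = "my_dataset"     # hypothetical BigQuery dataset name


def qualified_table(table_name, project=BQ_PROJECT, dataset=BQ_DATASET):
    """Build a 'project.dataset.table' reference from the config constants."""
    return "{0}.{1}.{2}".format(project, dataset, table_name)


if __name__ == "__main__":
    # Source and destination tables used by the tasks in the DAG.
    print(qualified_table("properties_raw"))
    print(qualified_table("properties_inter"))
```

Keeping the project and dataset in one place means that switching to another BigQuery project only requires changing the constants, not every query string.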